- LTX Studio's Extend feature lets you continue any AI video clip from its last frame, building seamless sequences up to 60 seconds without manual stitching or continuity breaks.

- Each extension inherits the lighting, composition, and motion of the previous segment, with duration options from 4–12 seconds per iteration giving you granular creative control.

- The iterative structure means you can review and regenerate individual segments without rebuilding the full sequence, which suits ads, narrative content, and product showcases.

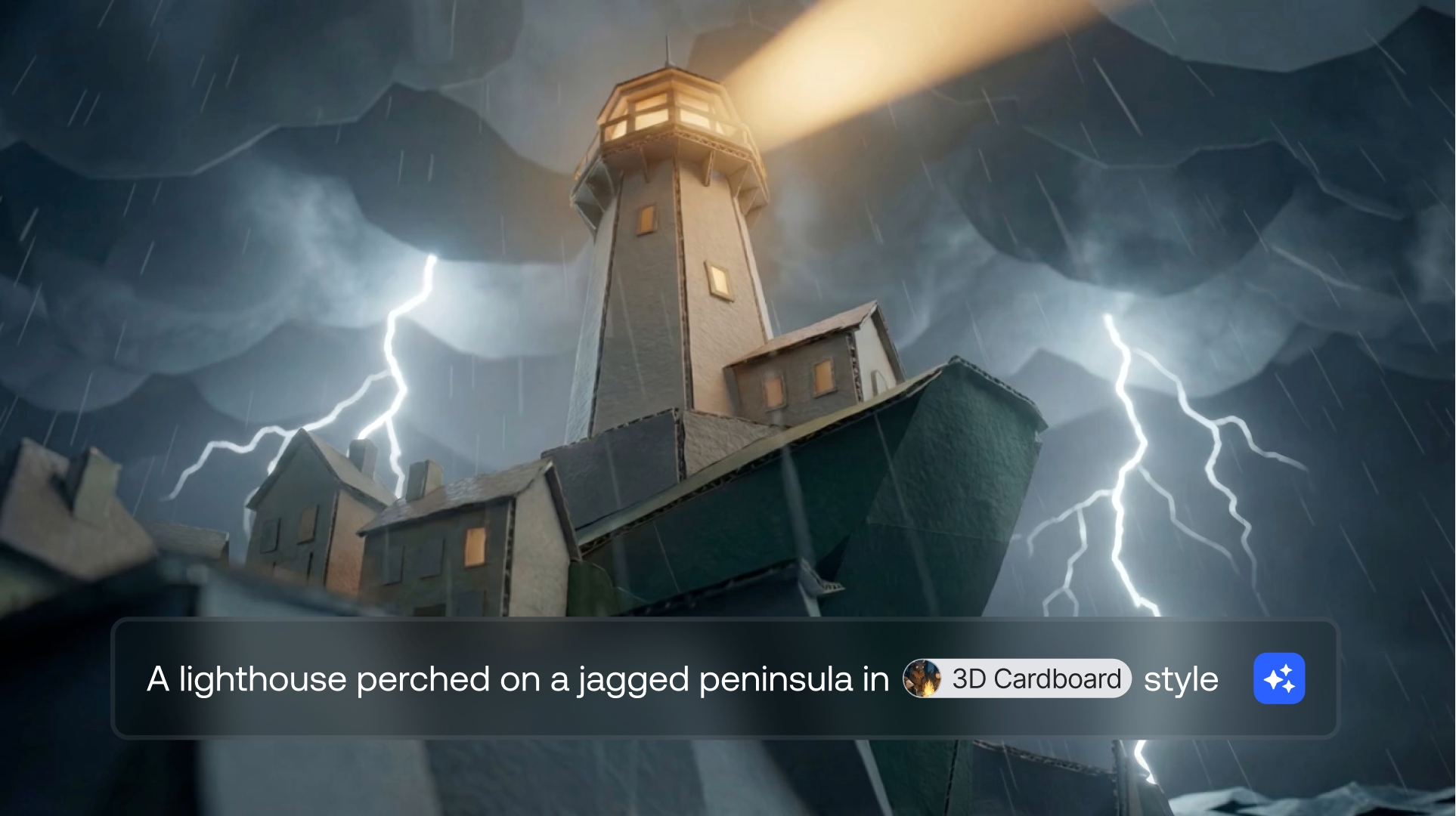

You generated a great four-second clip. The motion is clean, the camera angle works, the subject looks exactly the way you prompted it. But four seconds isn't a scene. It's a fragment. And the moment you try to stretch it into something longer, you're back to stitching clips together manually, fighting continuity breaks between takes, and hoping the transition doesn't look jarring.

This is the core tension with AI video generation today: the output quality has reached production level, but the output length hasn't kept up. Most AI video models generate clips between two and ten seconds. For social content, ads, and narrative sequences, you need more.

That's what the Extend feature in LTX Studio changes. Extend lets you continue an existing video from its last frame, maintaining visual continuity across multiple iterations, up to a total length of 60 seconds. No manual stitching. No separate renders. One continuous sequence built iteratively inside Gen Space.

What Is Video Extension in AI Generation?

Why AI Videos Are Short (and What Changed)

AI video models have to produce every frame of a clip, so the computational cost grows with duration. A four-second clip at 24 frames per second is 96 frames. A 60-second clip is 1,440 frames, 15 times as many. Memory and processing requirements grow with that frame count, which is why most models default to short clips.
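The frame counts above are easy to verify. The short Python sketch below only reproduces that arithmetic; the 24 fps figure comes from the example in the text, and the function name is made up for illustration.

```python
FPS = 24  # frame rate used in the example above


def frame_count(seconds: float, fps: int = FPS) -> int:
    """Number of frames in a clip of the given duration."""
    return round(seconds * fps)


short_clip = frame_count(4)      # four-second clip
full_clip = frame_count(60)      # 60-second sequence
ratio = full_clip / short_clip   # how many times more frames

print(short_clip, full_clip, ratio)
```

The ratio is a frame count only. How compute and memory actually scale with those frames depends on the model, which this sketch does not attempt to capture.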

The traditional workaround has been to generate multiple short clips and stitch them together in an editor. This works, but it creates visible continuity breaks between clips: slight shifts in lighting, color temperature, subject positioning, or camera angle. The result often looks like a montage of separate takes rather than a continuous shot.

Video extension takes a different approach. Instead of generating entirely new clips, it picks up from the last frame of your existing video and generates forward from that visual state. The new frames inherit the lighting, composition, subject position, and motion trajectory of the original clip. The result is a seamless continuation rather than a separate generation.

How Last-Frame Continuation Works

The Extend feature in LTX Studio uses last-frame conditioning. When you extend a video, the model receives the final frame of your current clip as the starting point for the next segment. It then generates new frames that flow naturally from that visual state, guided by your prompt.

This means the generated extension shares the same visual context as the original: same character appearance, same environment, same camera perspective. You can iterate this process multiple times, each extension picking up where the previous one left off, building a progressively longer sequence while maintaining the visual thread throughout.
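As a mental model only, last-frame continuation can be sketched in a few lines of Python. Nothing here is LTX Studio's actual implementation or API: `toy_model` is a hypothetical stand-in in which each "frame" is just an integer state, and the prompt is accepted but ignored.

```python
from typing import List


def toy_model(conditioning_frame: int, prompt: str, n_frames: int) -> List[int]:
    # Hypothetical stand-in for a video model. Each "frame" is an integer
    # state, and new frames simply continue from the conditioning frame.
    # A real model would also use the prompt; this toy ignores it.
    return [conditioning_frame + i for i in range(1, n_frames + 1)]


def extend(clip: List[int], prompt: str, n_frames: int) -> List[int]:
    last_frame = clip[-1]  # the final frame is the starting point
    new_frames = toy_model(last_frame, prompt, n_frames)
    return clip + new_frames  # earlier frames are kept untouched


base = [0, 1, 2, 3]
longer = extend(base, "the camera pans right", 3)
longer = extend(longer, "the subject turns", 2)
print(longer)
```

Because every extension starts from `clip[-1]`, the toy sequence never jumps. That is the property the real feature aims for with lighting, composition, and subject position.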

How to Extend AI Videos with LTX Studio

Step 1: Generate Your Base Video Clip

Start by creating your initial video in Gen Space. Write a prompt describing your scene, select your preferred video model, and generate. This first clip becomes the foundation for your extended sequence. Take time to get this base clip right since every extension builds from it.

Pay attention to the final frame of your base clip. The Extend feature continues from this exact visual state, so a clip that ends with the subject mid-motion or in a dynamic pose gives the extension more to work with than a clip that ends in a static hold.

Step 2: Open Extend in Gen Space

Once your base clip is generated, click on the video to access the Extend option. The feature is available directly within Gen Space, so you don't need to switch to a separate editor or export the clip first. Everything happens in the same workspace where you generated the original.

Step 3: Choose Your Extension Duration

Extend offers multiple duration options for each iteration: 4, 6, 8, 10, or 12 seconds. The choice depends on what's happening in your scene. For fast action or camera movement, shorter extensions (4-6 seconds) give you more control over the pacing. For slower, ambient shots, longer extensions (10-12 seconds) can capture the full arc of the motion without interruption.

Each extension is a separate generation, so you can mix durations across iterations. Start with a 6-second extension, follow it with a 10-second extension, and adjust based on how the scene evolves.
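If you like to plan durations before generating, a small sketch like the one below can check a plan against the limits described here. The duration options and the 60-second cap come from this article; the function and constant names are hypothetical and not part of LTX Studio.

```python
from typing import List

ALLOWED_DURATIONS = (4, 6, 8, 10, 12)  # per-iteration options listed above
MAX_TOTAL_SECONDS = 60                 # cap on the full sequence


def total_length(base_seconds: int, extensions: List[int]) -> int:
    """Return the total sequence length, or raise if the plan is invalid."""
    for duration in extensions:
        if duration not in ALLOWED_DURATIONS:
            raise ValueError(f"{duration}s is not an available duration")
    total = base_seconds + sum(extensions)
    if total > MAX_TOTAL_SECONDS:
        raise ValueError(f"{total}s exceeds the {MAX_TOTAL_SECONDS}s limit")
    return total


# A 4-second base clip, then a 6-second and a 10-second extension.
print(total_length(4, [6, 10]))
```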

Step 4: Iterate to Build Up to 60 Seconds

The Extend feature supports multiple iterations, up to a total video length of 60 seconds. After your first extension, you can extend again from the new endpoint. Each iteration picks up from the last frame of the previous segment, maintaining the visual continuity chain.

This iterative approach gives you creative control at every step. After each extension, review the result. If the motion veered in a direction you didn't want, you can regenerate that specific extension without losing the earlier segments. Think of it as building your video in blocks, where each block is guided by both your prompt and the visual context of what came before.
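The "blocks" idea can be illustrated with a small data structure. This is a hypothetical model of the workflow, not LTX Studio's internals: all class and method names are invented, and it covers only the case described above, where the most recent extension is regenerated while earlier segments stay as they are.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Segment:
    prompt: str
    seconds: int
    take: int = 1  # which attempt is currently kept


@dataclass
class Sequence:
    segments: List[Segment] = field(default_factory=list)

    def add_segment(self, prompt: str, seconds: int) -> None:
        self.segments.append(Segment(prompt, seconds))

    def regenerate_last(self, prompt: Optional[str] = None) -> None:
        # Replace only the newest block; everything before it is untouched.
        old = self.segments[-1]
        self.segments[-1] = Segment(prompt or old.prompt, old.seconds, old.take + 1)

    def total_seconds(self) -> int:
        return sum(s.seconds for s in self.segments)


seq = Sequence()
seq.add_segment("A hiker walks along a ridge", 4)  # base clip
seq.add_segment("The hiker stops and looks out over the valley", 6)
earlier = list(seq.segments[:-1])
seq.regenerate_last("The hiker stops and slowly turns toward the valley")
print(seq.total_seconds(), seq.segments[-1].take)
```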

Step 5: Review and Refine Your Extended Sequence

Once you've built your sequence to the desired length, play it back in full. Look for any points where the visual continuity breaks or where the motion doesn't flow naturally between segments. If a specific extension doesn't work, regenerate just that segment. The iterative structure means you can refine individual blocks without rebuilding the entire sequence.

Tips for Better Extended AI Videos

Prompting for Continuity Across Extensions

The prompt you write for each extension matters. While the visual context carries forward from the last frame, the prompt guides what happens next. Be specific about the action or motion you want in each segment. Instead of repeating the same prompt, describe the next beat of the scene: "The character turns to face the camera" or "The camera slowly pans right to reveal the landscape."

Avoid contradicting the visual state of the previous segment. If your last frame shows the subject facing left, don't prompt for them facing right unless you want a visible turn. The model will try to reconcile the prompt with the last-frame conditioning, and conflicting instructions can create awkward transitions.

Choosing the Right Extension Length per Iteration

Shorter extensions (4-6 seconds) are better for scenes with complex motion, multiple subjects, or frequent camera changes. They give you more decision points and reduce the risk of visual drift accumulating over a long generation.

Longer extensions (10-12 seconds) work well for establishing shots, slow camera movements, and atmospheric scenes where consistency is easier to maintain. They also reduce the total number of iterations needed to reach your target length, which streamlines the workflow.

A practical approach: start with shorter extensions for the first few iterations while you establish the visual direction, then switch to longer extensions once the scene is stable.
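One way to turn that approach into a concrete plan is sketched below. The defaults (two 6-second warm-up extensions, then 12-second extensions) are assumptions chosen for illustration, not recommendations from LTX Studio, and the function name is hypothetical.

```python
from typing import List

ALLOWED_DURATIONS = (4, 6, 8, 10, 12)  # per-iteration options from this article


def plan_extensions(
    base_seconds: int,
    target_seconds: int,
    warmup_iterations: int = 2,  # assumed: how many short extensions to start with
    warmup_length: int = 6,      # assumed: length of each short extension
    steady_length: int = 12,     # assumed: length once the scene is stable
) -> List[int]:
    plan: List[int] = []
    total = base_seconds
    while True:
        remaining = target_seconds - total
        preferred = warmup_length if len(plan) < warmup_iterations else steady_length
        # Pick the largest available duration within both the preference
        # and the time still left before the target.
        fits = [d for d in ALLOWED_DURATIONS if d <= min(preferred, remaining)]
        if not fits:
            break  # target reached, or the gap is shorter than 4 seconds
        plan.append(max(fits))
        total += plan[-1]
    return plan


print(plan_extensions(base_seconds=4, target_seconds=60))
```

If the remaining gap is shorter than the smallest option, the plan stops just under the target instead of overshooting it.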

Maintaining Visual Consistency in Long Sequences

Visual consistency across a 60-second AI-generated sequence requires attention at each iteration. Review every extension before moving to the next. Small inconsistencies in lighting or color temperature can compound over multiple iterations, making the end of the sequence look noticeably different from the beginning.

If you notice drift happening, regenerate the extension with a more specific prompt that anchors the visual properties. Describing the lighting conditions, time of day, or color palette explicitly in your prompt can help the model maintain consistency across longer sequences.
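A simple way to keep those anchors consistent is to append the same descriptive phrases to every extension prompt. The sketch below is plain string handling; the anchor wording and the helper name are illustrative assumptions, and how strongly any model responds to such phrases will vary.

```python
from typing import List, Sequence

# Assumed example anchors: fixed descriptions repeated in every prompt.
DEFAULT_ANCHORS = (
    "Golden-hour sunlight from the left",
    "Warm amber color palette",
)


def anchored_prompt(beat: str, anchors: Sequence[str] = DEFAULT_ANCHORS) -> str:
    """Combine the next story beat with fixed visual anchors."""
    parts: List[str] = [beat.rstrip(".")] + [a.rstrip(".") for a in anchors]
    return ". ".join(parts) + "."


print(anchored_prompt("The character turns to face the camera."))
```

Only the beat changes from one extension to the next, so the lighting and palette descriptions stay word-for-word identical across the sequence.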

Creative Use Cases for Extended AI Videos

Long-Form AI Video for Marketing and Ads

Most digital ad formats require 15 to 60 seconds of video. With Extend, you can build a complete ad sequence from a single starting generation, maintaining visual consistency throughout. Generate the opening hook, extend into the product showcase, and continue into the closing CTA without switching tools or stitching clips manually.

Extended Sequences for Storytelling and Narrative

Narrative content depends on continuity. A character walking through a scene, a camera tracking across an environment, a conversation unfolding in real time: these need more than four-second fragments. Extend lets you build these sequences iteratively, maintaining character appearance and environment consistency across the full duration.

Combined with LTX Studio's storyboard generator, you can plan your narrative arc visually, then use Extend to build each scene to its full length.

Building Demo Reels and Product Showcases

Product showcase videos benefit from smooth, uninterrupted camera work: orbiting around a product, zooming in on details, pulling back to show context. Extend enables these continuous camera movements by generating each segment of the motion as a continuation of the previous one, creating the effect of a single, choreographed shot.

Extend vs Other Approaches to Longer AI Videos

Extend vs Stitching Separate Clips

Manual stitching involves generating individual clips separately and joining them in a video editor. While this gives you maximum control over each clip, the trade-off is visible seams between segments. Lighting can shift, subject positioning can jump, and the overall sequence lacks the continuity of a single shot.

Extend addresses this by maintaining last-frame continuity. Each segment inherits the visual state of the previous one, which means transitions are seamless by default. The trade-off is that you have less control over radical changes between segments since each extension is anchored to what came before.

Extend vs Regenerating at Longer Durations

Some models allow you to set a longer duration upfront. The drawback is that longer single generations are more likely to drift visually over time, and you have no control over what happens at specific points in the sequence. If second 15 looks great but second 20 goes off track, you have to regenerate the entire clip.

The iterative approach of Extend gives you a checkpoint after every segment. You review, approve, and then continue. This granular control means you can catch and correct issues early rather than discovering them after a full-length generation.
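The difference can be put in rough numbers. The sketch below compares how many seconds of video need regenerating when something goes wrong at a given point, assuming the problem is caught at the review right after the affected segment. The segment lengths are an arbitrary example and the function names are hypothetical.

```python
from typing import List


def redo_single(total_seconds: int) -> int:
    # One long generation: any problem means regenerating the whole clip.
    return total_seconds


def redo_iterative(segments: List[int], fault_second: int) -> int:
    # Iterative build: only the segment containing the problem is redone.
    start = 0
    for length in segments:
        if start <= fault_second < start + length:
            return length
        start += length
    raise ValueError("fault_second is outside the sequence")


segments = [4, 6, 10, 10]  # base clip plus three extensions, 30 seconds total
single_cost = redo_single(sum(segments))
iterative_cost = redo_iterative(segments, fault_second=20)
print(single_cost, iterative_cost)
```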

Start Building Longer AI Videos

The gap between a compelling four-second clip and a usable video has been one of AI video generation's most practical limitations. Extend closes that gap by giving you a structured, iterative way to build sequences up to 60 seconds long, with visual continuity maintained at every step.

For marketers producing ad content, studios developing narrative sequences, or agencies building demo reels, the ability to extend AI video without losing consistency changes what you can produce. You're no longer limited to fragments. You can build full scenes.

Ready to try it? Open LTX Studio, generate your base clip in Gen Space, and start extending. See how far 60 seconds takes your next project.
